What is DeepSeek V4? — A New Paradigm in Coding AI

In February 2026, Chinese AI startup DeepSeek once again disrupted the industry with DeepSeek V4, a large language model with a 1-trillion-parameter architecture optimized specifically for coding tasks. The previous V3 model matched GPT-4o and Claude 3.5 Sonnet at a reported training cost of just $5.6 million; V4 builds on it with three architectural innovations and challenges Western AI giants head-on.

According to internal benchmarks, DeepSeek V4 resolves over 80% of SWE-bench tasks while delivering inference at 10x to 40x lower cost than Western counterparts. It is set to be open-sourced under the Apache 2.0 license and is designed to run on consumer hardware: dual RTX 4090s or a single RTX 5090.

Three Core Architectural Innovations

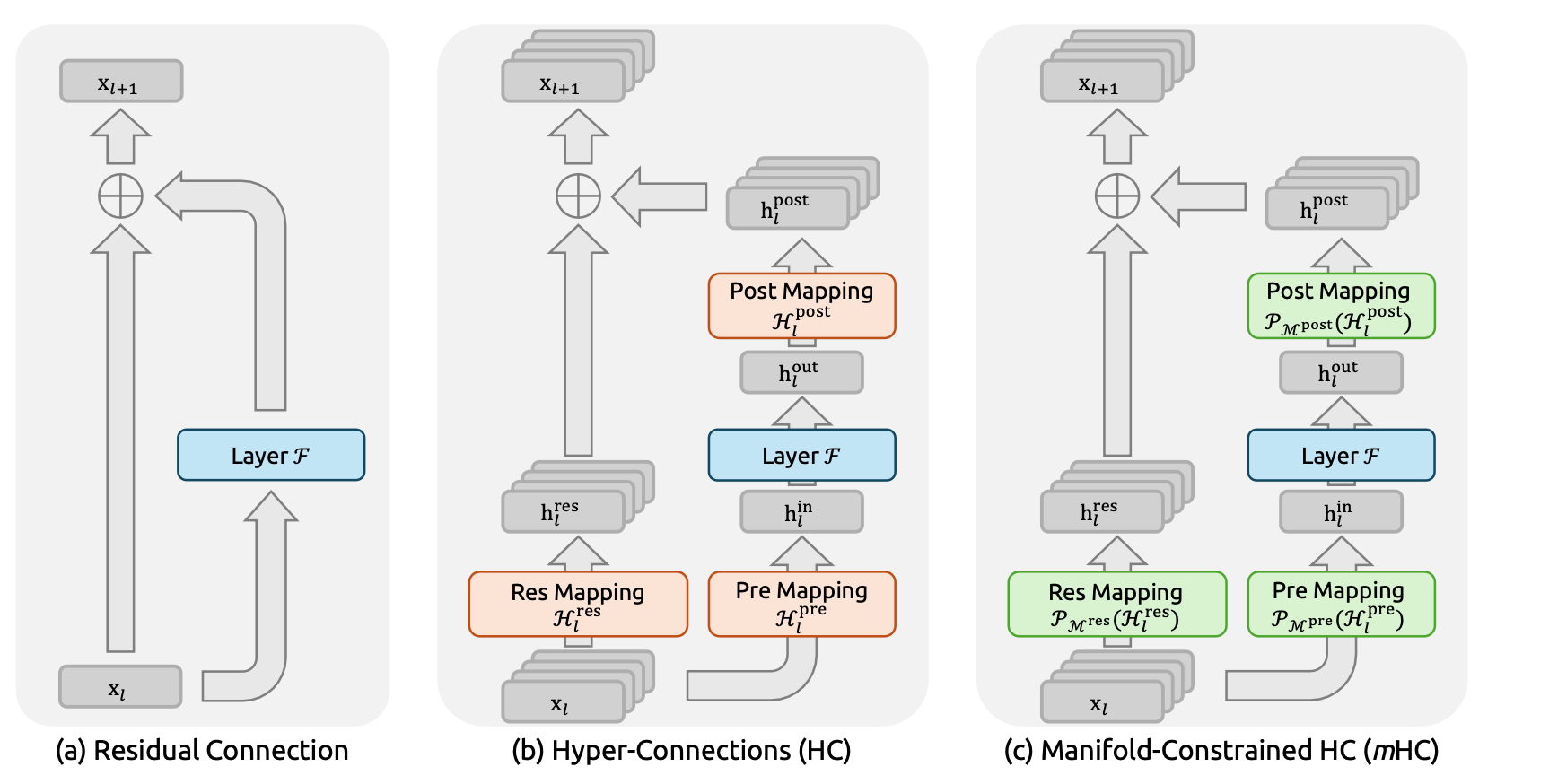

1. mHC (Manifold-Constrained Hyper-Connections)

mHC, introduced in a paper released on December 31, 2025, solves the critical signal amplification issue plaguing traditional hyper-connections. While earlier unconstrained approaches saw signal amplification as high as 3,000x, mHC uses the Sinkhorn-Knopp algorithm to limit this to just 1.6x, dramatically improving training stability at scale.
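Sinkhorn-Knopp itself is a simple procedure: alternately rescale the rows and columns of a positive matrix until every row and column sums to 1. A doubly stochastic matrix of this kind has spectral norm 1, which is one way to see why repeated mixing through such matrices cannot blow a signal up. The sketch below shows only the bare algorithm on a small example; the function name `sinkhornKnopp`, the iteration count, and the sample values are illustrative assumptions, and how mHC wires this projection into its hyper-connections is not covered here.

```javascript
// Sinkhorn-Knopp: alternately normalize rows and columns of a positive matrix
// until it is approximately doubly stochastic (every row and column sums to 1).
// ASSUMPTION: a square matrix with strictly positive entries.
function sinkhornKnopp(matrix, iterations = 50) {
  let m = matrix.map((row) => row.slice()); // copy so the input is untouched
  const n = m.length;
  for (let it = 0; it < iterations; it++) {
    // Row step: divide each row by its sum.
    m = m.map((row) => {
      const sum = row.reduce((a, b) => a + b, 0);
      return row.map((v) => v / sum);
    });
    // Column step: divide each column by its sum.
    const sums = Array.from({ length: n }, (_, j) =>
      m.reduce((acc, row) => acc + row[j], 0));
    m = m.map((row) => row.map((v, j) => v / sums[j]));
  }
  return m;
}

// Hypothetical 3x3 mixing matrix with uneven weights.
const mixing = sinkhornKnopp([
  [4.0, 1.0, 0.5],
  [0.2, 3.0, 1.0],
  [1.0, 0.5, 2.0],
]);

const rowSums = mixing.map((row) => row.reduce((a, b) => a + b, 0));
const colSums = mixing[0].map((_, j) =>
  mixing.reduce((acc, row) => acc + row[j], 0));
console.log(rowSums, colSums); // both should be very close to 1 in every entry
```

The iteration count trades accuracy for compute; for small positive matrices like this one, a few dozen iterations are typically more than enough.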

Key benchmark results:

- BBH: 43.8 → 51.0 (+7.2 points)

- DROP: 62.1 → 67.8 (+5.7 points)

- GSM8K: 71.2 → 77.3 (+6.1 points)

- MMLU: 68.4 → 73.6 (+5.2 points)

2. Engram Conditional Memory

Introduced on January 13, 2026, DeepSeek’s Engram technology draws inspiration from human memory mechanisms. It enables AI to selectively retain and recall information based on task context. In coding, this translates to:

- Maintaining consistent naming conventions across an entire project

- Tracking dependencies and API signatures

- Applying consistent patterns during large-scale codebase refactoring

3. DeepSeek Sparse Attention (DSA)

The most innovative component is DSA (DeepSeek Sparse Attention). It slashes computational costs by 50% compared to standard attention while supporting context windows of over one million tokens. Instead of treating all tokens equally, DSA focuses computational resources on the most contextually relevant parts.
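To make "focusing on the most relevant tokens" concrete, here is a minimal sketch of top-k sparse attention for a single query. It is not DSA's actual mechanism, which the text above does not specify; the top-k selection rule, the function name `topKSparseAttention`, and the toy vectors are all assumptions chosen for illustration.

```javascript
// Illustrative top-k sparse attention for one query vector.
// ASSUMPTION: tokens are selected by plain scaled dot-product score; the
// article does not describe how DSA actually chooses which tokens to attend to.
function dot(a, b) {
  return a.reduce((sum, v, i) => sum + v * b[i], 0);
}

function topKSparseAttention(query, keys, values, k) {
  const scale = 1 / Math.sqrt(query.length);
  // Score every key against the query.
  const scored = keys.map((key, index) => ({
    index,
    score: dot(query, key) * scale,
  }));
  // Keep only the k highest-scoring tokens.
  const selected = scored.sort((a, b) => b.score - a.score).slice(0, k);
  // Softmax over the selected scores only (subtract the max for stability).
  const maxScore = selected[0].score;
  const exps = selected.map((s) => Math.exp(s.score - maxScore));
  const total = exps.reduce((a, b) => a + b, 0);
  // Weighted sum of the selected value vectors; all other tokens are ignored.
  const output = new Array(values[0].length).fill(0);
  selected.forEach((s, i) => {
    const weight = exps[i] / total;
    values[s.index].forEach((v, d) => { output[d] += weight * v; });
  });
  return { output, attended: selected.map((s) => s.index) };
}

const keys = [[1, 0], [0, 1], [0.9, 0.1], [-1, 0]];
const values = [[10, 0], [0, 10], [8, 2], [-10, 0]];
const result = topKSparseAttention([1, 0], keys, values, 2);
console.log(result.attended); // [0, 2]: the two keys most aligned with the query
```

In this toy version every key is still scored, so the saving comes only from the softmax and the value aggregation; a production design would need a cheaper way to pick candidates for the cost reduction to matter at million-token scale.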

What DeepSeek V4 Means for Developers

Full Codebase Understanding

Thanks to the 1-million-token context window, V4 can process an entire large codebase in a single pass. This goes far beyond generating snippets—it enables true multi-file reasoning:

- Understanding import/export relationships

- Tracing type definitions across modules

- Maintaining consistent API signatures

- Detecting unused code and dependencies
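Before sending a whole repository in one request, it helps to estimate whether it fits in the window at all. The sketch below uses a rough heuristic of about 4 characters per token, which is an assumption and not DeepSeek's tokenizer; the names `estimateTokens` and `fitsInContext` and the `{ path, content }` file shape are hypothetical.

```javascript
// Rough check of whether a set of source files fits in the context window.
// ASSUMPTION: ~4 characters per token is a rule of thumb, not DeepSeek's
// tokenizer; real counts vary by programming language and tokenizer.
const CHARS_PER_TOKEN = 4;
const CONTEXT_WINDOW = 1_000_000; // the 1-million-token window cited above

function estimateTokens(text) {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

function fitsInContext(files, reservedForOutput = 8192) {
  // Sum the estimates per file, then leave headroom for the model's reply.
  const total = files.reduce((sum, f) => sum + estimateTokens(f.content), 0);
  return { total, fits: total + reservedForOutput <= CONTEXT_WINDOW };
}

// Hypothetical in-memory files; in practice these would be read from disk.
const files = [
  { path: 'src/index.ts', content: 'export const x = 1;\n'.repeat(1000) },
  { path: 'src/util.ts', content: 'export function f() {}\n'.repeat(500) },
];
console.log(fitsInContext(files));
```

If the estimate lands near the limit, counting with the provider's real tokenizer is the safer choice.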

Multi-File Bug Fixing

One of V4’s most anticipated capabilities is autonomously diagnosing and fixing bugs that span multiple files. Without requiring developers to pinpoint issues, V4 analyzes stack traces and execution paths to propose fixes within the full system context.
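A practical first step in this kind of workflow is working out which files a stack trace touches, so their contents can be supplied as context. The sketch below does that for Node.js-style traces; the frame format it expects, the function name `filesFromStackTrace`, and the sample trace are assumptions for illustration, not part of any DeepSeek tooling.

```javascript
// Collect the unique project files referenced by a Node.js-style stack trace.
// ASSUMPTION: frames look like "at fn (/path/file.js:12:18)" or
// "at /path/file.js:7:3"; other runtimes format frames differently.
function filesFromStackTrace(stack) {
  const framePattern = /\(?([^\s()]+):(\d+):(\d+)\)?$/;
  const seen = new Set();
  for (const line of stack.split('\n')) {
    const trimmed = line.trim();
    if (!trimmed.startsWith('at ')) continue; // skip the error message line
    const match = framePattern.exec(trimmed);
    if (!match) continue;
    const file = match[1];
    // Skip runtime internals and third-party packages.
    if (file.startsWith('node:') || file.includes('node_modules')) continue;
    seen.add(file);
  }
  return [...seen]; // Set keeps first-seen order, innermost frame first
}

// Hypothetical trace spanning three project files plus one runtime frame.
const trace = [
  "TypeError: Cannot read properties of undefined (reading 'id')",
  '    at getUser (/app/src/users.js:12:18)',
  '    at handler (/app/src/routes/profile.js:40:9)',
  '    at /app/src/server.js:7:3',
  '    at Module._compile (node:internal/modules/cjs/loader:1105:14)',
].join('\n');

console.log(filesFromStackTrace(trace));
// [ '/app/src/users.js', '/app/src/routes/profile.js', '/app/src/server.js' ]
```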

Hardware Requirements

| Tier | Hardware | Use Case |
|---|---|---|
| Consumer | Dual RTX 4090 or RTX 5090 | Local development |
| Enterprise | Standard datacenter GPUs | Corporate deployment |

Comparison with Competing Models

DeepSeek V4 is designed to directly challenge these leading models:

- GitHub Copilot (powered by GPT-5.3-Codex): Similar performance on SWE-bench, but V4 outperforms in cost-efficiency

- Claude Opus 4.6: V4 emerges as a strong challenger in agent-like coding tasks

- Google Gemini 2.5 Pro: While Gemini leads in multimodal capabilities, V4 is expected to surpass it in coding specialization

Crucially, its planned open-source release under the Apache 2.0 license allows companies to freely deploy it on-premises or fine-tune it internally—a major strategic advantage.

How to Use DeepSeek V4 (Example)

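```javascript
// DeepSeek V4 API (OpenAI compatible)
// Needs Node.js 18+ (built-in fetch) and an ES module for top-level await.
const response = await fetch('https://api.deepseek.com/v1/chat/completions', {
  method: 'POST',
  headers: {
    'Content-Type': 'application/json',
    'Authorization': `Bearer ${process.env.DEEPSEEK_API_KEY}`
  },
  body: JSON.stringify({
    model: 'deepseek-v4-coder',
    messages: [
      {
        role: 'user',
        content: 'Analyze this TypeScript codebase and identify all type errors'
      }
    ],
    max_tokens: 8192
  })
});

// Fail loudly on HTTP errors instead of treating an error body as a completion.
if (!response.ok) {
  throw new Error(`Request failed: ${response.status} ${await response.text()}`);
}

// OpenAI-compatible responses carry the reply in choices[0].message.content.
const data = await response.json();
console.log(data.choices[0].message.content);
```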

Real-World Use Cases

Scenario 1: Legacy Code Refactoring

When migrating hundreds of thousands of lines of legacy JavaScript to TypeScript, V4 leverages its 1-million-token context window and Engram memory to understand global patterns and automatically generate consistent type definitions.

Scenario 2: Autonomous Bug Fixing

Integrate V4 into CI/CD pipelines: upon build failure, it can automatically analyze stack traces and create a pull request with fixes—an autonomous debugging agent.
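As a rough idea of what the CI step might send, the sketch below assembles an OpenAI-compatible request body from a build log and the relevant files. The model name is taken from the article's API example; the function name `buildFixRequest`, the prompt wording, and the `{ path, content }` file shape are hypothetical, and turning the model's reply into a pull request is left to the CI tooling.

```javascript
// Build an OpenAI-compatible chat request asking the model to propose a fix.
// ASSUMPTIONS: the model name comes from the article's API example; the
// prompt wording and the { path, content } file shape are hypothetical.
function buildFixRequest(buildLog, files) {
  // Label each file so the model can refer to it by path.
  const fileSections = files
    .map((f) => `--- ${f.path} ---\n${f.content}`)
    .join('\n\n');
  return {
    model: 'deepseek-v4-coder',
    messages: [
      {
        role: 'system',
        content: 'You are a debugging assistant. Reply with a unified diff only.',
      },
      {
        role: 'user',
        content: `The build failed with:\n${buildLog}\n\nRelevant files:\n${fileSections}`,
      },
    ],
    max_tokens: 8192,
  };
}

// Hypothetical failure: a function called on a possibly undefined value.
const requestBody = buildFixRequest(
  "TypeError: Cannot read properties of undefined (reading 'id')",
  [{ path: 'src/users.js', content: 'export const getId = (u) => u.id;' }],
);
console.log(JSON.stringify(requestBody, null, 2));
```

Asking for a unified diff keeps the reply machine-applicable, though a real pipeline should still validate the patch and run the test suite before opening a pull request.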

Scenario 3: Automated Code Review

Connect V4 with GitHub Actions to deliver deep, context-aware code reviews automatically whenever a pull request is submitted.

Conclusion: Democratizing AI Coding

DeepSeek V4 is not just another coding assistant. It represents a significant leap toward a fully autonomous software engineering AI capable of understanding and managing entire software projects. Its open-source release and support for consumer hardware are set to shift AI-powered development tools from exclusive corporate assets into the hands of every developer.

The combination of training efficiency, cutting-edge architecture, and open strategy suggests DeepSeek may once again reshape the economics of the AI industry. In 2026, the race in AI coding has entered a bold new phase.
