
What is Apple Visual Intelligence?

In February 2026, Apple CEO Tim Cook made a surprise announcement: "Our most popular feature is Visual Intelligence." Visual Intelligence uses your iPhone's camera to recognize objects, places, and text in real time, then offers relevant information and actions. The technology is now poised to move beyond the iPhone and become a core feature of Apple's next-generation wearable devices.


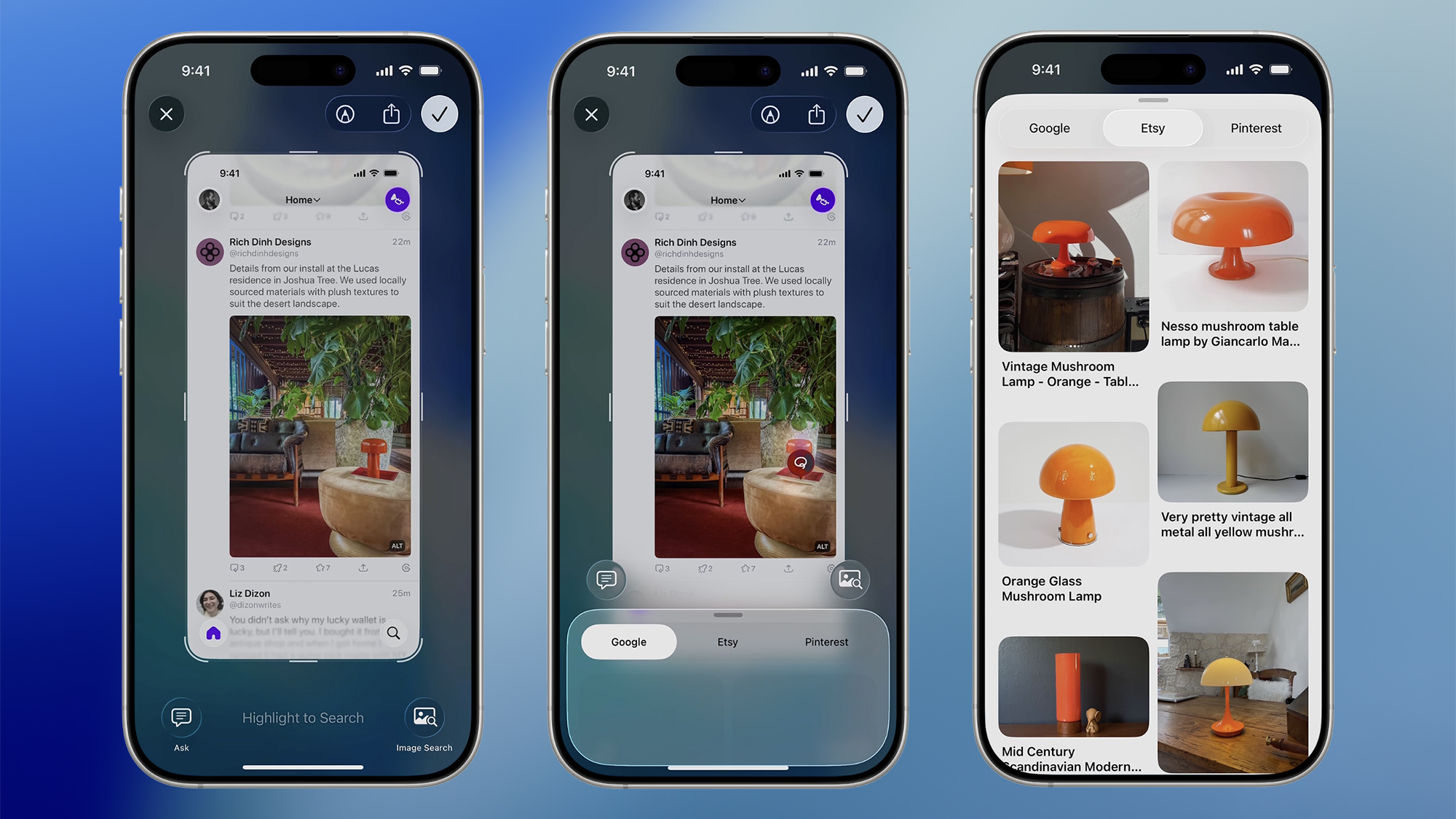

Mastering Visual Intelligence Basic Features

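Visual Intelligence is available on iPhone 15 Pro and later models. On models with Camera Control (introduced with iPhone 16), press and hold the Camera Control button to activate it. On iPhone 15 Pro and other supported models without Camera Control, use the Action button or a Control Center control instead. Key features as of iOS 26: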


Object and Place Recognition

- Plant & Animal Identification: Point your camera at plants or animals to instantly learn their species names and characteristics

- Landmark Recognition: Point at buildings or tourist attractions to display history, information, and operating hours

- QR Codes & Barcodes: Automatically recognizes and suggests relevant actions (opening links, saving contacts, etc.)

- Text Recognition: Capture, translate, copy, or search any text on screen
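Developers who want similar code and text recognition inside their own apps can get it from Apple's Vision framework. The sketch below is an illustration, not how Visual Intelligence itself is implemented: `ScanResult` and `scan(_:)` are hypothetical names, and the code assumes a recent SDK (iOS 15 / macOS 12 or later) where request results are strongly typed.

```swift
import Vision
import CoreGraphics

/// Barcode payloads and text lines found in one still image.
/// (Hypothetical helper type for this example.)
struct ScanResult {
    var barcodePayloads: [String] = []
    var textLines: [String] = []
}

/// Runs barcode detection and text recognition on a single image.
/// Both requests are executed by the Vision framework on the device.
func scan(_ image: CGImage) throws -> ScanResult {
    let barcodeRequest = VNDetectBarcodesRequest()
    let textRequest = VNRecognizeTextRequest()
    textRequest.recognitionLevel = .accurate

    // perform(_:) is synchronous, so results can be read once it returns.
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([barcodeRequest, textRequest])

    var result = ScanResult()
    for observation in barcodeRequest.results ?? [] {
        // QR codes and most barcodes expose their content as a string payload.
        if let payload = observation.payloadStringValue {
            result.barcodePayloads.append(payload)
        }
    }
    for observation in textRequest.results ?? [] {
        // Each observation is one line of text; keep the best candidate.
        if let best = observation.topCandidates(1).first {
            result.textLines.append(best.string)
        }
    }
    return result
}
```

An app could then decide what to offer for each payload, for example opening a URL found in a QR code or passing recognized text on to translation or search.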


Cross-App Integration Actions

The true strength of Visual Intelligence is cross-app integration that goes beyond simple recognition. Point at a menu, and it connects with Yelp or Maps to show reviews instantly. Scan an event poster, and it offers to add the event to your calendar. Snap a business card, and it saves the details as a contact.
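To give a feel for the step between "recognized text" and "calendar event or contact", here is a minimal sketch that pulls dates, phone numbers, and links out of a block of text with Foundation's `NSDataDetector`. It is an assumption about how an app might approach this, not Apple's implementation; `ExtractedDetails` and `extractDetails(from:)` are hypothetical names.

```swift
import Foundation

/// Details pulled out of text recognized on a poster or business card.
/// (Hypothetical helper type for this example.)
struct ExtractedDetails {
    var dates: [Date] = []
    var phoneNumbers: [String] = []
    var links: [URL] = []
}

/// Scans free-form text for dates, phone numbers, and links.
func extractDetails(from text: String) throws -> ExtractedDetails {
    let types: NSTextCheckingResult.CheckingType = [.date, .phoneNumber, .link]
    let detector = try NSDataDetector(types: types.rawValue)
    let range = NSRange(text.startIndex..., in: text)

    var details = ExtractedDetails()
    for match in detector.matches(in: text, options: [], range: range) {
        if match.resultType == .date, let date = match.date {
            details.dates.append(date)
        } else if match.resultType == .phoneNumber, let number = match.phoneNumber {
            details.phoneNumbers.append(number)
        } else if match.resultType == .link, let url = match.url {
            details.links.append(url)
        }
    }
    return details
}

// Example input, as it might come back from text recognition on a business card.
let card = "Call 408-555-0123 or visit https://example.com"
let details = try extractDetails(from: card)
print(details.phoneNumbers, details.links)
```

From here, an app would typically hand the dates to EventKit and the phone numbers or links to the Contacts framework, after asking the user to confirm.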

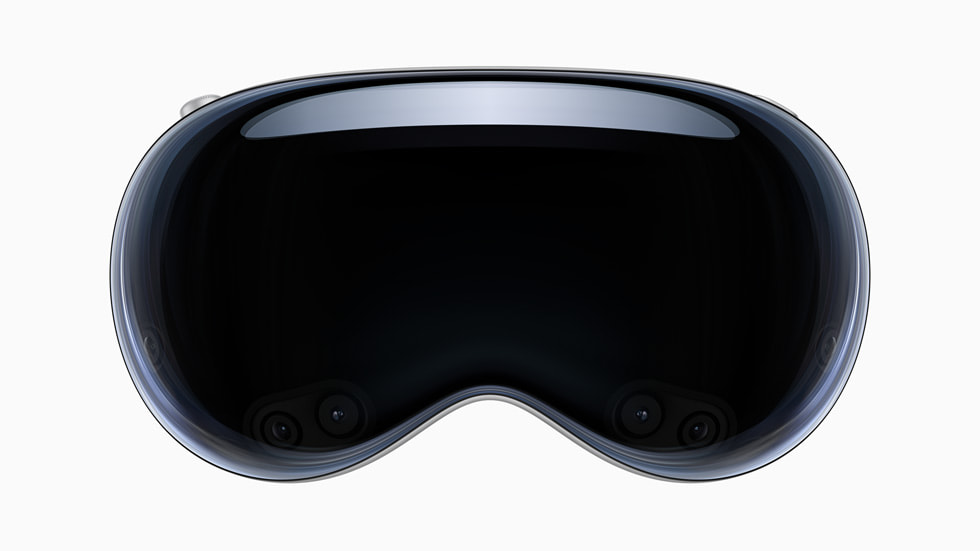

The Next Step in Wearable AI — The Future of Visual Intelligence

According to reporting by Bloomberg's Mark Gurman and Apple internal documents, Tim Cook has explicitly designated Visual Intelligence as the core of Apple's wearable strategy. How might it be applied to the wearable lineup expected in 2026?

Apple Glasses (Smart Glasses)

Apple's smart glasses, reportedly in development, are expected to combine Visual Intelligence with an always-on camera. Expected features:

- Real-time Translation: Instantly translate foreign language signs and menus directly on the lenses

- Object Recognition: Overlay information about objects in your field of view on the lenses

- 1080p Video Recording: Hands-free, gaze-based recording

- Navigation: Display routes with arrows in the direction you're walking

Apple Watch Ultra 3

The Apple Watch Ultra 3, expected to feature a camera module, brings Visual Intelligence to your wrist. You can point the camera at food to instantly check calorie and ingredient information, or recognize your surroundings during exercise to determine your location even without GPS.

AirPods Pro 3

AirPods Pro 3 represent the fusion of audio AI and visual AI. They analyze ambient sound to understand context and, combined with Visual Intelligence on the paired iPhone, provide real-time "audio descriptions of what you're looking at." The feature is also gaining attention as an accessibility aid for people who are blind or have low vision.

iPhone 18 Pro: Visual Intelligence 2nd Generation

The iPhone 18 Pro, scheduled for release in September 2026, is expected to feature the 2nd generation of Visual Intelligence. Key expected improvements:

- Real-time Video Analysis: Understand moving video in real-time, going beyond static images

- Multi-Object Tracking: Track multiple objects simultaneously and provide information for each

- Shopping Integration: Point your camera at products you like to instantly get price comparisons and purchase links

- Deep Apple Intelligence Integration: Connect with personal calendar, contacts, and messages for more contextual suggestions

Visual Intelligence API for Developers

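Apple has opened Visual Intelligence to third-party apps in iOS 26. Integration with the system Visual Intelligence experience goes through App Intents, while the Vision framework (not to be confused with Apple Vision Pro) and Core ML let developers build comparable on-device recognition into their own apps. For example, Vision's built-in classifier can label the contents of a captured image:

```swift
import Vision
import CoreGraphics

// Classify a captured image with Vision's built-in image classifier.
// `capturedImage` is assumed to come from your own capture pipeline.
func classify(_ capturedImage: CGImage) throws {
    // Recognition request setup
    let request = VNClassifyImageRequest { request, error in
        guard let results = request.results as? [VNClassificationObservation] else { return }
        // Report only labels the classifier is reasonably confident about.
        for observation in results where observation.confidence > 0.3 {
            print("Recognized: \(observation.identifier), confidence: \(observation.confidence)")
        }
    }

    // Image processing (perform(_:) runs synchronously and throws on failure)
    let handler = VNImageRequestHandler(cgImage: capturedImage, options: [:])
    try handler.perform([request])
}
```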
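For object detection with bounding boxes, one option is to run a Core ML detection model through Vision's `VNCoreMLRequest`, which returns `VNRecognizedObjectObservation` values. The sketch below assumes you already have a loaded detection model (for example, one trained with Create ML); `DetectedObject` and `detectObjects(in:using:)` are hypothetical names for this example.

```swift
import Vision
import CoreML
import CoreGraphics

/// One detected object. (Hypothetical helper type for this example.)
struct DetectedObject {
    let label: String
    let confidence: Float
    let boundingBox: CGRect  // normalized coordinates, origin at lower left
}

/// Runs a Core ML object detector on an image via the Vision framework.
/// `model` is assumed to be an object-detection model you supply.
func detectObjects(in image: CGImage, using model: MLModel) throws -> [DetectedObject] {
    let visionModel = try VNCoreMLModel(for: model)
    let request = VNCoreMLRequest(model: visionModel)
    request.imageCropAndScaleOption = .scaleFill

    // perform(_:) is synchronous, so results can be read once it returns.
    let handler = VNImageRequestHandler(cgImage: image, options: [:])
    try handler.perform([request])

    // Detection models yield VNRecognizedObjectObservation; other model types do not.
    let observations = request.results as? [VNRecognizedObjectObservation] ?? []
    return observations.compactMap { observation in
        guard let top = observation.labels.first else { return nil }
        return DetectedObject(label: top.identifier,
                              confidence: top.confidence,
                              boundingBox: observation.boundingBox)
    }
}
```

If the supplied model is a classifier rather than a detector, the cast fails and the function returns an empty array, so it is worth checking the model type during development.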

Visual Intelligence vs Competing Products

| Feature | Apple Visual Intelligence | Google Lens | Samsung Visual Assist |
|---|---|---|---|
| On-Device Processing | ✅ Apple Silicon | ⚠️ Cloud-Centric | ⚠️ Hybrid |
| Personal Data Integration | ✅ Calendar, Contacts | ⚠️ Google Account | ⚠️ Samsung Account |
| Privacy | ✅ On-Device First | ❌ Server Transmission | ⚠️ Optional |
| Wearable Expansion | ✅ Planned | ⚠️ Limited | ⚠️ Galaxy Ring |
| Cross-App Actions | ✅ iOS App Integration | ✅ Google Apps | ⚠️ Limited |

Privacy — Apple's Key Differentiator

One of the most important aspects of Visual Intelligence is its on-device-first principle. Most recognition runs on the Neural Engine in Apple silicon, and the data is not transmitted to servers. Even when cloud processing is required, Apple's Private Cloud Compute ensures that the data is not stored on Apple servers and is deleted as soon as the request has been processed.

This is fundamentally different from Google Lens, which uploads images to servers for analysis. Because information about your surroundings does not accumulate on corporate servers, Visual Intelligence has strong potential in enterprise, healthcare, and security environments.
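Third-party apps that run their own models can follow a similar on-device approach with Core ML, which executes models locally. As a small sketch, the configuration below asks Core ML to use only the CPU and Neural Engine; `makeOnDeviceConfiguration()` is a hypothetical helper, and the `.cpuAndNeuralEngine` option assumes iOS 16 / macOS 13 or later.

```swift
import CoreML

/// Builds a Core ML configuration that restricts inference to the
/// CPU and Neural Engine. (Hypothetical helper for this example.)
func makeOnDeviceConfiguration() -> MLModelConfiguration {
    let configuration = MLModelConfiguration()
    configuration.computeUnits = .cpuAndNeuralEngine
    return configuration
}

// Usage, assuming `compiledModelURL` points at a compiled .mlmodelc bundle:
// let model = try MLModel(contentsOf: compiledModelURL,
//                         configuration: makeOnDeviceConfiguration())
```

The setting is a constraint on which hardware Core ML may use, not a guarantee that every layer runs on the Neural Engine; Core ML still chooses per operation.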

Real-Life Usage Tips

- Restaurant Menus: Snap a photo of a foreign-language menu to get a translation into your language and Yelp reviews at the same time

- Prescriptions: Scan medicine packaging or a prescription to check usage instructions and side effects (for reference only, not a substitute for medical advice)

- Wine Labels: Snap a wine label to get reviews and food pairing recommendations

- Event Posters: Snap concert or exhibition posters to get booking links + calendar registration

- Plant Care: Snap your house plants to get watering schedules, sunlight conditions, and pest/disease diagnosis

Outlook Beyond 2026

Visual Intelligence is one of the central pillars of Apple's AI strategy. It is the core technology behind the vision of "AI responding instantly to everything you see without needing to open an app to use AI." In the second half of 2026, with the launch of Apple Glasses, we can expect a new computing paradigm in which visual AI is always on.

If you have a supported iPhone, try pressing and holding the Camera Control button now to experience Visual Intelligence. The future of AI is already in your hands.
